This is Part IV of my series on multi-agent AI architecture. Part I covered centralized orchestration. Part II covered swarm intelligence. Part III covered the horizontal scaling problem. This post looks at the communication topology underneath all of them.

Multi-agent AI systems are often described as networks of specialized agents working toward a shared goal.

One agent plans. Another searches. Another writes code. Another validates. Another interacts with tools. Another supervises the run.

At small scale, that sounds clean.

The problem appears when every agent can talk directly to every other agent. Underneath the architecture sits an old counting problem:

How many communication channels exist in a fully connected group?

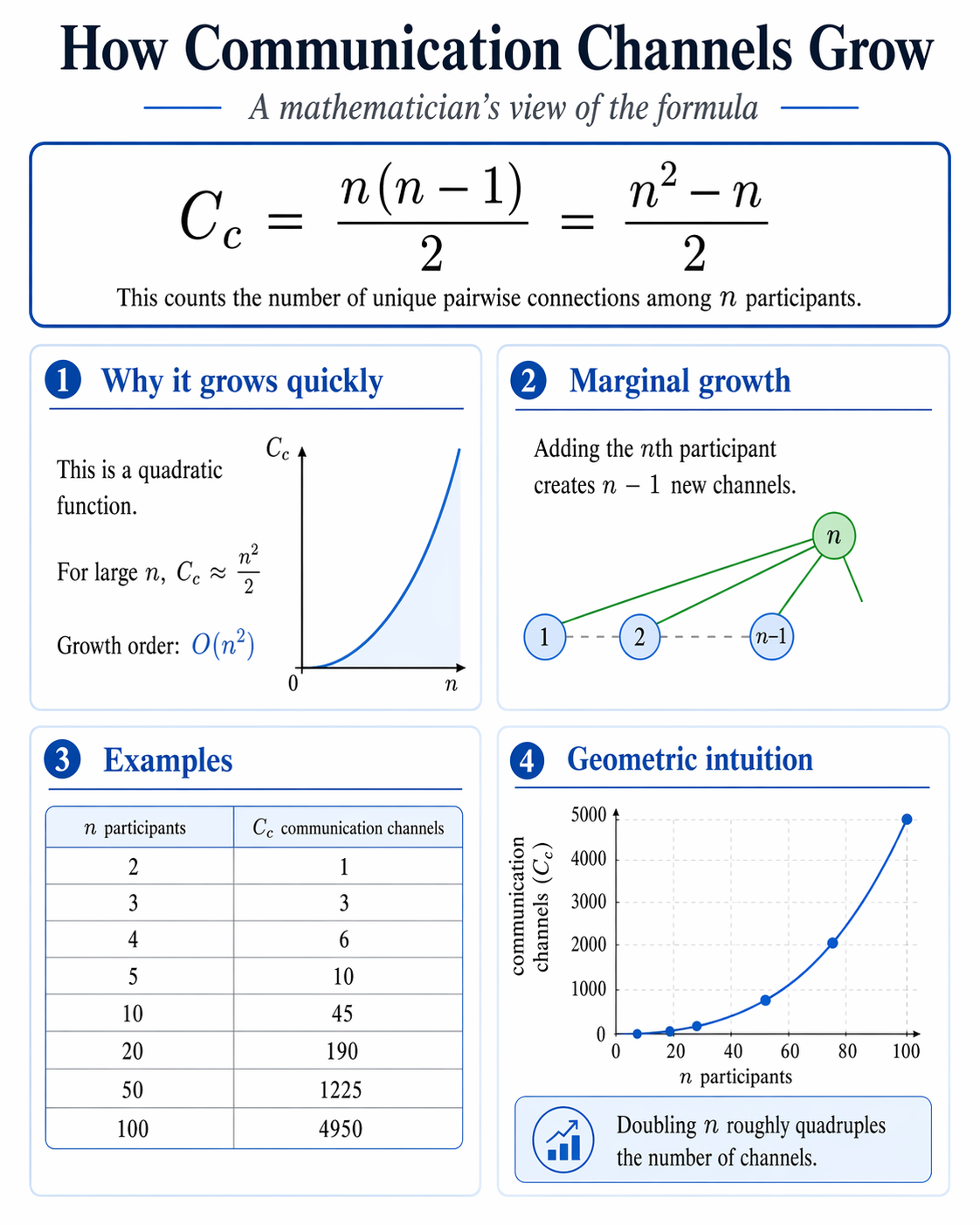

$$ C_c = \frac{n(n-1)}{2} $$

Where:

- $C_c$ is the number of communication channels

- $n$ is the number of agents, systems, teams, or participants

That formula counts unique pairwise connections among $n$ participants. It shows up in graph theory, network design, team coordination, and distributed systems. It also applies to agentic AI.

If every agent can talk to every other agent, communication complexity grows quadratically with the number of agents, not linearly.

The counting problem

The same formula can be written as:

$$ C_c = \frac{n^2 - n}{2} $$

For large values of $n$, the dominant term is $n^2$, so the growth rate is:

$$ O(n^2) $$

That matters because each new agent adds a path to every agent already in the system, not a single new path overall.

Adding the $n$th agent creates:

$$ n - 1 $$

new possible channels.

The marginal coordination cost rises with the size of the system.
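
The $n - 1$ figure follows from subtracting the channel count for $n - 1$ agents from the count for $n$ agents. As a short worked step, writing $C_c(n)$ for the count at size $n$ (a notation introduced here only for this derivation):

$$ C_c(n) - C_c(n-1) = \frac{n(n-1)}{2} - \frac{(n-1)(n-2)}{2} = \frac{(n-1)\,\bigl[n - (n-2)\bigr]}{2} = n - 1 $$

So the 10th agent adds 9 channels, and the 100th adds 99.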

Here is the simple count:

| Number of agents | Direct communication channels |
|---|---|
| 2 | 1 |
| 3 | 3 |
| 4 | 6 |
| 5 | 10 |
| 10 | 45 |
| 20 | 190 |
| 50 | 1,225 |
| 100 | 4,950 |
| 1,000 | 499,500 |
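
These counts are easy to reproduce. The following is a minimal Python sketch, not part of any framework: `channel_count` and `brute_force_count` are names chosen here for illustration. The first applies the closed-form formula, and the second enumerates every unordered pair as a sanity check.

```python
from itertools import combinations


def channel_count(n: int) -> int:
    """Unique pairwise channels among n fully connected participants: n(n-1)/2."""
    if n < 0:
        raise ValueError("n must be non-negative")
    return n * (n - 1) // 2


def brute_force_count(n: int) -> int:
    """Count the same channels by enumerating every unordered pair of participants."""
    return sum(1 for _ in combinations(range(n), 2))


for n in (2, 3, 4, 5, 10, 20, 50, 100, 1000):
    closed_form = channel_count(n)
    # The enumeration must agree with the closed-form formula.
    assert closed_form == brute_force_count(n)
    print(f"{n:>5} agents -> {closed_form:>7,} channels")
```

The enumeration is only there to confirm the formula. In practice the closed form is all you need.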

This is the same reason communication breaks down in large teams, microservice meshes, and distributed control systems. The AI version is not exempt from the math.

Why this matters for agentic AI

In a multi-agent system, a communication channel is rarely just a network path or API call.

It can also be:

- a message route

- a context-sharing path

- a delegation edge

- a tool invocation dependency

- a state synchronization path

- a trust boundary

- an audit path

- a retry path

- an authorization relationship

Once agents are allowed to exchange context, trigger actions, or call tools on each other’s behalf, the number of possible interactions becomes an operational problem, not just a graph-theoretic one.

At that point the real questions are architectural:

- Who is allowed to talk to whom?

- Which agent owns the source of truth?

- Which agent can make decisions?

- Which agent can call external tools?

- Which messages are trusted?

- Which state is canonical?

- Which actions are reversible?

- Which outputs are audited?
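
The first of those questions, who is allowed to talk to whom, can be made explicit in code rather than left implicit in prompts. The sketch below is one hypothetical way to do it: `ChannelPolicy`, its methods, and the agent names are all illustrative assumptions, not an API from any agent framework. It treats each permitted route as a directed sender-to-receiver pair and denies everything else by default.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ChannelPolicy:
    """Explicit allowlist of directed (sender, receiver) routes. Hypothetical sketch."""

    allowed: set[tuple[str, str]] = field(default_factory=set)

    def allow(self, sender: str, receiver: str) -> None:
        """Permit messages from sender to receiver (one direction only)."""
        self.allowed.add((sender, receiver))

    def can_send(self, sender: str, receiver: str) -> bool:
        """Deny by default: a route exists only if it was explicitly allowed."""
        return (sender, receiver) in self.allowed


policy = ChannelPolicy()
for worker in ("planner", "searcher", "coder", "validator"):
    policy.allow("supervisor", worker)  # assignments flow down
    policy.allow(worker, "supervisor")  # results flow up

print(policy.can_send("coder", "supervisor"))  # True
print(policy.can_send("coder", "searcher"))    # False: no worker-to-worker route
```

A deny-by-default allowlist keeps the set of live channels enumerable, which is what makes auditing and authorization tractable later.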

That is where system design starts to matter more than model capability.

A super agent changes the topology

One way to cut this complexity is to introduce a super agent: an orchestrator, supervisor, coordinator, or controller.

Instead of allowing every worker to talk directly to every other worker, the communication pattern becomes structured. Worker agents hand results upward, receive assignments downward, and interact through a coordinating layer.

In a fully connected system:

$$ C_c = \frac{n(n-1)}{2} $$

In a simple hub-and-spoke system, the number of direct communication paths is closer to:

$$ C_c \approx n $$

(exactly $n$ if one coordinator is added on top of $n$ workers, or $n - 1$ if the coordinator is counted among the $n$ agents).

The first grows as:

$$ O(n^2) $$

The second grows as:

$$ O(n) $$

That is the gap between a topology that becomes dense very quickly and one that remains tractable as the system grows.

This is why orchestration matters. A super agent is a way to control coordination cost.

The trade-off

The orchestrator pattern is not free.

A super agent can become a bottleneck, a single point of failure, a latency amplifier, and the place where trust, policy, and context all concentrate. If the coordinator is wrong, overloaded, or poorly instrumented, the whole system pays for it.

So the goal is not to put one boss agent above everything. The goal is to choose the right topology for the problem.

Sometimes the right design is hierarchical. Sometimes it is event-driven. Sometimes it uses shared state. Sometimes strict role-based delegation is enough. And sometimes a small fully connected group is perfectly reasonable.

The mistake is assuming that more agent-to-agent communication automatically means more intelligence. In practice it often means more coordination cost, more failure modes, and more ambiguity about authority.

The architectural lesson

Agentic AI is a systems problem as much as a model problem.

The shape of the agent graph matters as much as the capability of any individual agent.

A group of strong agents with poor topology can produce fragile, inconsistent, expensive, and hard-to-audit behavior. A group of simpler agents with clear boundaries, structured coordination, and well-defined authority can be much more reliable.

Software engineers have seen this before:

- microservices

- distributed systems

- event-driven architectures

- organizational design

- API ecosystems

- large engineering teams

The topology matters. Authority boundaries matter. Observability matters. Failure handling matters.

The same is true for AI agents.

Three points worth keeping in view

Agent count is not system capability

Adding more agents does not automatically make a system more intelligent. It may increase specialization, but it also increases coordination cost. Past a certain point, communication overhead becomes the dominant limit rather than model quality.

Fully connected agent networks grow quadratically

If every agent can talk to every other agent, the number of possible communication channels is:

$$ \frac{n(n-1)}{2} $$

Doubling the agent count roughly quadruples the number of possible direct interactions. That is why unrestricted agent-to-agent communication becomes hard to reason about.
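
The "roughly quadruples" claim can be checked directly from the formula. As a short worked step, writing $C_c(n)$ for the channel count at size $n$ (a notation introduced here only for this check):

$$ \frac{C_c(2n)}{C_c(n)} = \frac{2n(2n-1)/2}{n(n-1)/2} = \frac{2(2n-1)}{n-1} \longrightarrow 4 \quad \text{as } n \to \infty $$

For example, going from 10 to 20 agents takes the count from 45 to 190, a factor of about 4.2. Going from 50 to 100 takes it from 1,225 to 4,950, a factor of about 4.04.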

Scalable agent systems need structure

The next generation of agentic AI systems will not come from simply wiring together more agents. It will come from better architecture:

- orchestrators

- supervisors

- message buses

- shared memory

- role-based permissions

- tool boundaries

- audit trails

- state ownership

- validation layers

- recovery mechanisms

The intelligence of a multi-agent system depends on the agents, but it also depends on the structure connecting them.

Closing thought

A multi-agent system is a communication system. Communication systems have mathematics, and the math does not stay polite as the node count rises.

The formula

$$ C_c = \frac{n(n-1)}{2} $$

is just a counting formula, but it is enough to expose the design risk.

Fully connected multi-agent systems do not scale gracefully. When every agent can talk to every other agent, the number of possible interactions grows much faster than the number of agents.

That affects latency, cost, auditability, debugging, safety, context management, security boundaries, tool permissions, state consistency, and recovery.

Once those channels can trigger tools, change state, or make business decisions, they become control paths.

Control paths need governance.