1. Architectural foundation: the Q-learning paradigm

Q-learning represents a paradigm shift in decision-support architecture, moving beyond the static pattern recognition of traditional machine learning toward a dynamic, sequential decision-making framework. While standard predictive models excel at classifying historical data, Q-learning establishes an autonomous agent designed to achieve goal-oriented behavior through direct environmental interaction. This transition from prediction to action allows organizations to deploy systems that do not merely forecast outcomes but actively navigate complex processes to maximize long-term utility.

The technical core of this framework is the Q-value: an estimate of the cumulative long-term return expected from taking a specific action in a given state and following an optimal policy thereafter. The learning mechanism is fundamentally iterative. The agent typically begins with zero-initialized values and refines them through a continuous cycle of trial, feedback, and consequence. After each action, the update rule shifts the current Q-value estimate a small step toward a target: the reward just received plus the discounted value of the best action available in the next state. Through repeated transitions, and provided every state–action pair keeps being visited, the agent’s estimates converge toward the true expected return, yielding a policy for navigating the environment.
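The cycle of trial, feedback, and consequence can be sketched in a few lines. This is a minimal illustration rather than a production design: the five-state chain environment, the function name `train_chain`, and the chosen learning rate, discount factor, and exploration rate are all assumptions made for the example.

```python
import random

def train_chain(n_states=5, episodes=500, alpha=0.1, gamma=0.9, epsilon=0.2, seed=0):
    """Tabular Q-learning on a hypothetical 1-D chain (assumed for illustration).

    The agent starts in state 0; reaching the rightmost state pays 1.0 and
    ends the episode. Actions: 0 = step left, 1 = step right.
    """
    rng = random.Random(seed)
    actions = (0, 1)
    goal = n_states - 1
    q = [[0.0, 0.0] for _ in range(n_states)]  # zero-initialized Q-table

    for _ in range(episodes):
        state = 0
        while state != goal:
            # Trial: mostly act greedily (random tie-break), sometimes explore.
            if rng.random() < epsilon:
                action = rng.choice(actions)
            else:
                best = max(q[state])
                action = rng.choice([a for a in actions if q[state][a] == best])

            # Feedback: the environment returns the next state and a reward.
            next_state = max(0, state - 1) if action == 0 else state + 1
            reward = 1.0 if next_state == goal else 0.0

            # Consequence: move the estimate toward reward + discounted best next value.
            best_next = 0.0 if next_state == goal else max(q[next_state])
            target = reward + gamma * best_next
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
    return q

q_table = train_chain()
for s, (left, right) in enumerate(q_table[:-1]):
    print(f"state {s}: Q(left)={left:.3f}  Q(right)={right:.3f}")
```

Under these assumptions, the learned value for moving right should approach 1.0 next to the goal and shrink by roughly a factor of `gamma` for each additional step away from it.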

From a strategic standpoint, the advantage of learning from consequences is paramount. Unlike supervised learning, which requires massive, pre-labeled datasets that are often expensive or impossible to acquire, Q-learning discovers optimal strategies autonomously. That makes it a strong candidate for business problems where the correct answer is unknown and must be discovered through exploration. However, while the theoretical promise is significant, the transition to a production-ready system is governed by rigid architectural constraints that dictate feasibility.

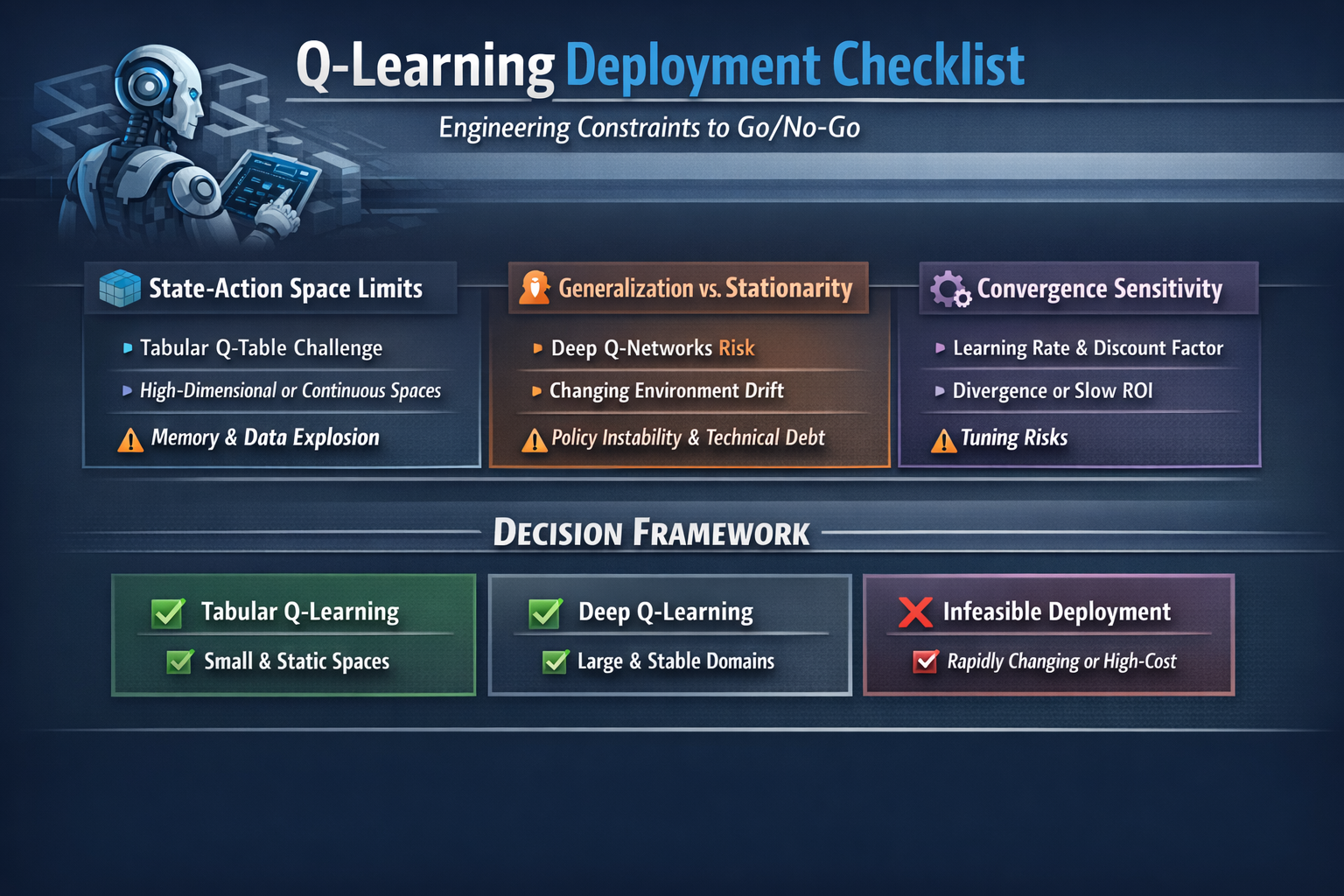

2. Scalability analysis: the state–action space threshold

The viability of a Q-learning deployment is primarily determined by the state–action space explosion: the relationship between environmental complexity and the computational resources required to represent it. In a reinforcement learning context, the dimensions of the problem dictate whether a standard tabular approach can achieve a functional return on investment (ROI).

Tabular Q-learning requires the agent to maintain a literal lookup table with one entry for every possible state–action pair. As the number of state variables increases, the number of entries, and with it the memory footprint and the data-collection requirement, grows exponentially. This is the curse of dimensionality.
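A quick back-of-the-envelope calculation makes the growth concrete. The sketch below assumes every state variable takes the same number of discrete values and that each Q-value is stored as one 64-bit float; `table_entries` and the example figures are illustrative, not measurements.

```python
def table_entries(values_per_variable: int, n_variables: int, n_actions: int) -> int:
    """Number of cells in a tabular Q-table when every state variable is discrete."""
    n_states = values_per_variable ** n_variables
    return n_states * n_actions

BYTES_PER_CELL = 8  # assumption: one 64-bit float per Q-value

for n_variables in (2, 4, 8, 12):
    cells = table_entries(values_per_variable=10, n_variables=n_variables, n_actions=4)
    gigabytes = cells * BYTES_PER_CELL / 1e9
    print(f"{n_variables:>2} variables: {cells:,} cells, about {gigabytes:,.6f} GB")
```

Each additional variable multiplies the table size by the number of values that variable can take, and every one of those cells also needs its own share of training experience.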

| Environment attribute | Tabular feasibility | Generalization capability | Infrastructure impact |
|---|---|---|---|
| Small / discrete spaces | High: ideal for well-defined, finite logic. | None: atomic lookups only; every state is new. | Minimal memory and compute overhead. |
| Large / continuous spaces | Low: computationally and logically infeasible. | None: no ability to infer value for unseen states. | Exponential state-space growth. |

Beyond the state space, a critical feasibility hurdle lies in continuous action spaces. While continuous states are difficult, continuous actions require the agent to compute an argmax over an infinite set of possibilities, making standard Q-learning effectively a no-go without heavy discretization or an actor–critic architecture. The organizational “so what” is data-collection cost: in a high-dimensional space, the system may require millions of trials before it stops making costly mistakes in production. Without generalization, time-to-value is often delayed beyond the window of project viability, because the agent must visit each state–action pair, usually many times, before its estimate for that pair becomes reliable.
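The discretization workaround can be sketched as follows. The helper names and the toy Q-function (peaked at an action of 0.37) are hypothetical, chosen only to show that the argmax becomes a finite search and that its accuracy depends on the grid resolution.

```python
def discretize(low: float, high: float, n_bins: int) -> list[float]:
    """Replace a continuous action interval with n_bins evenly spaced candidates."""
    step = (high - low) / (n_bins - 1)
    return [low + i * step for i in range(n_bins)]

def greedy_action(q_fn, state, candidate_actions):
    """Argmax over a finite candidate set; not possible over an infinite set."""
    return max(candidate_actions, key=lambda a: q_fn(state, a))

def toy_q(state, action):
    # Hypothetical Q-function whose best action is 0.37 (state is ignored).
    return -(action - 0.37) ** 2

coarse = greedy_action(toy_q, None, discretize(0.0, 1.0, 5))
fine = greedy_action(toy_q, None, discretize(0.0, 1.0, 101))
print(f"5 bins   -> best action {coarse:.2f}")
print(f"101 bins -> best action {fine:.2f}")
```

A finer grid gets closer to the true optimum but multiplies the number of actions the agent has to try in every state, which is the cost the paragraph above refers to.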

3. Input data evaluation: high dimensionality and function approximation

The format of organizational data—ranging from structured discrete values to high-dimensional visual streams—is a primary determinant of the required architecture. Tabular methods fail in many modern enterprise environments because they treat every unique configuration as a completely novel state, lacking the ability to identify similarities between nearly identical inputs.

To address this, we move to deep Q-networks (DQN), using function approximation as the technological bridge. Instead of a lookup table, a neural network acts as a regressor to estimate Q-values. Architecturally, this is significant because the network treats states as vectors of features rather than atomic identifiers. That allows interpolation: the agent can infer the value of a state it has never encountered based on its similarity to known feature patterns.
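The interpolation idea can be shown without a neural network. The sketch below swaps in a linear regressor as a deliberately simple stand-in for the network a DQN would use; the feature map, the sample values, and the function names are assumptions for illustration only.

```python
def features(x: float) -> list[float]:
    """Describe a state by a feature vector instead of an atomic identifier."""
    return [1.0, x]

def predict(weights, x):
    return sum(w * f for w, f in zip(weights, features(x)))

def fit_linear(samples, alpha=0.02, epochs=2000):
    """Fit weights by repeated small gradient steps toward the observed values."""
    weights = [0.0, 0.0]
    for _ in range(epochs):
        for x, target in samples:
            error = target - predict(weights, x)
            weights = [w + alpha * error * f for w, f in zip(weights, features(x))]
    return weights

# Hypothetical value estimates for a single action, observed at four states.
observed = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (4.0, 9.0)]

table = dict(observed)          # tabular: one entry per visited state
weights = fit_linear(observed)  # approximator: shared weights across states

unseen_state = 3.0
print("table lookup:", table.get(unseen_state, 0.0))       # no entry, falls back to the initial value
print("approximator:", round(predict(weights, unseen_state), 3))
```

The table has nothing to say about the unseen state, while the fitted model returns a value close to 7.0 by interpolating between its neighbours. The same mechanism also means that an error in the shared weights affects every state at once.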

While deep variants provide the power to process raw images and complex sensory data, they introduce significant engineering complexity. Shifting to deep variants increases inference latency and necessitates more robust retraining pipelines. The move from recording results to approximating them means the system is now susceptible to the instabilities of neural network training, requiring a much higher level of oversight throughout the development lifecycle to ensure that predicted rewards align with physical or economic reality.

4. Environmental dynamics and policy stability

A foundational assumption in reinforcement learning is stationarity: the requirement that the environment’s transition rules and reward structures remain relatively constant. For a decision-support system to be mission-critical, the objective it is optimizing must be stable enough for the policy to converge.

In the real world, organizational dynamics are frequently non-stationary. If market conditions, consumer behavior, or operational rules shift, the algorithm finds itself chasing a moving target. When the underlying logic of the environment drifts, previously learned Q-values describe a world that no longer exists, and the decision policy built on them can degrade or collapse outright.

The strategic risk here is a combination of model drift and poor exploration. If the environment is volatile, the agent may never spend enough time in a stable regime to identify a useful policy. In a live business context, this produces brittle systems that yield inconsistent or suboptimal decisions. A policy that was optimal yesterday can become catastrophic today if reward signals have shifted, necessitating continuous monitoring and a retraining infrastructure to maintain operational stability.
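A small experiment shows why stale estimates are a problem when rewards shift. The sketch assumes a single action whose reward jumps from 1.0 to 5.0 halfway through, and compares a long-run average with a constant step size; the function name and the numbers are illustrative.

```python
def track(rewards, step_size=None):
    """Incrementally estimate a reward level.

    step_size=None uses 1/n, which yields the plain average of all history;
    a constant step size weights recent rewards more heavily.
    """
    estimate = 0.0
    for n, reward in enumerate(rewards, start=1):
        alpha = step_size if step_size is not None else 1.0 / n
        estimate += alpha * (reward - estimate)
    return estimate

# Hypothetical regime change: the reward shifts from 1.0 to 5.0.
rewards = [1.0] * 100 + [5.0] * 100

print("plain average:      ", round(track(rewards), 3))
print("constant step (0.1):", round(track(rewards, step_size=0.1), 3))
```

The plain average is still anchored halfway between the two regimes, while the constant step size has almost fully adapted. Neither helps if the environment shifts again before the estimate settles, which is the volatility risk described above.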

5. Technical risk assessment: convergence and hyperparameters

Reinforcement learning systems are notoriously sensitive to technical configuration. Hyperparameters such as the learning rate (how aggressively new information replaces old estimates) and the discount factor (the valuation of future versus immediate rewards) serve as the steering mechanisms for the entire architecture.
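The effect of the discount factor is easy to see on a fixed reward sequence. The sketch below uses a hypothetical sequence in which the only reward arrives three steps in the future; `discounted_return` is an illustrative helper, not part of any library.

```python
def discounted_return(rewards, gamma):
    """Sum of rewards where a reward k steps ahead is weighted by gamma ** k."""
    total = 0.0
    for reward in reversed(rewards):
        total = reward + gamma * total
    return total

# Hypothetical sequence: nothing now, a payoff of 10 three steps later.
rewards = [0.0, 0.0, 0.0, 10.0]

for gamma in (0.5, 0.9, 0.99):
    print(f"gamma={gamma}: return={discounted_return(rewards, gamma):.3f}")
```

A low discount factor makes the delayed payoff look nearly worthless, so the agent favors immediate rewards; a value close to 1 preserves most of it, at the cost of slower and noisier learning over long horizons.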

Poor hyperparameter selection leads to critical operational failures:

- Slow convergence: The agent consumes massive compute resources without reaching a functional policy, turning the project into a sunk cost.

- Divergence: The learning process fails entirely, with Q-values fluctuating wildly and the policy never settling.
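Both failure modes have a simple analogue in a single Q-value tracking a noisy reward. The sketch below assumes a reward that alternates between 10 and 0 (mean 5) and is only an illustration of the learning-rate trade-off; true divergence usually involves bootstrapping and function approximation, which this toy omits.

```python
def run_estimate(alpha, steps=1000, tail=50):
    """Return the final estimate and how much it moved over the last `tail` steps."""
    estimate = 0.0
    history = []
    for t in range(steps):
        reward = 10.0 if t % 2 == 0 else 0.0  # hypothetical noisy signal, mean 5
        estimate += alpha * (reward - estimate)
        history.append(estimate)
    recent = history[-tail:]
    return estimate, max(recent) - min(recent)

for alpha in (0.001, 0.05, 0.9):
    final, spread = run_estimate(alpha)
    print(f"alpha={alpha}: final estimate={final:.2f}, recent spread={spread:.2f}")
```

With a very small learning rate the estimate is still well short of the mean after 1,000 steps; with a very large one it keeps swinging between extremes and never settles.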

The most dangerous outcome is a silent failure: a system appears to be learning but is actually converging on a brittle, narrow policy that fails when faced with minor environmental stochasticity. That lack of robust convergence does not merely delay ROI; it introduces the risk of deploying a system that performs well in a simulator but breaks in the field, leading to unpredictable behavior in production.

6. Final decision matrix: tabular vs. deep Q-learning

To facilitate the go/no-go decision, stakeholders should evaluate the project against the following technical feasibility indicators.

Candidate for tabular Q-learning

- Environments with small, discrete state and action spaces.

- Highly stationary dynamics with static rules.

- Low-dimensional, structured data inputs.

Candidate for deep Q-learning / advanced variants

- High-complexity environments (large or continuous state spaces).

- Visual or high-dimensional sensory inputs.

- Use cases requiring generalization across similar but non-identical scenarios.

Infeasible scenarios

- Highly non-stationary dynamics where rules change faster than the agent can learn.

- Extreme exploration sensitivity: If the cost of a trial-and-error failure includes damaged physical assets, lost customers, or regulatory breaches, standard Q-learning is a no-go without a high-fidelity simulator.
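The indicators above can be written down as a small checklist function. This is a hypothetical simplification for discussion purposes: the function name, the boolean inputs, and the order of the checks are assumptions, and a real assessment would weigh these factors rather than treat them as yes/no switches.

```python
def recommend_approach(small_discrete_space: bool,
                       stationary: bool,
                       safe_to_explore: bool,
                       has_simulator: bool = False) -> str:
    """Map the feasibility indicators to a coarse recommendation (illustrative only)."""
    if not stationary:
        return "infeasible: dynamics change faster than the agent can learn"
    if not safe_to_explore and not has_simulator:
        return "infeasible: exploration failures are too costly without a simulator"
    if small_discrete_space:
        return "tabular Q-learning"
    return "deep Q-learning or an advanced variant"

print(recommend_approach(small_discrete_space=True, stationary=True, safe_to_explore=True))
print(recommend_approach(small_discrete_space=False, stationary=True, safe_to_explore=False,
                         has_simulator=True))
```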

Q-learning remains a vital mental model for understanding how systems learn from consequences. However, the move to production requires a rigorous upfront assessment of the state–action space and the cost of exploration. By addressing these architectural constraints early, organizations can mitigate technical debt and ensure that their decision systems provide a stable, long-term competitive advantage.

Beyond prediction: what the trial and error of Q-learning teaches us about intelligence

Most of our modern encounters with artificial intelligence are essentially transactional. We provide a prompt, and the system predicts the next word; we upload a photo, and the algorithm classifies the image. This is the world of supervised learning: a powerful, high-speed form of pattern recognition that maps static inputs to labeled outputs. While impressive, this prediction-first view of AI misses a vital dimension of true intelligence: the capacity for agency.

The most profound leap in machine learning occurs when we move from systems that merely know to systems that act. Intelligence, in its most naturalistic form, is not about memorizing a static map of the world; it is about navigating an environment and learning from the ripples of one’s own choices. This is the domain of Q-learning, a foundational reinforcement learning method that trades the safety of labeled answers for the messy, iterative reality of learning through consequence.

Takeaway 1: Learning from consequences, not labels

In the traditional AI paradigm, we serve as the system’s omniscient tutor, providing the correct answers via massive, labeled datasets. Q-learning discards this hierarchy, replacing the passive observer with an agent: an active participant locked in a continuous feedback loop with its environment. There are no predetermined labels here, only outcomes.

As defined in classic reinforcement learning theory, the agent learns which action is best in a given state by trying, receiving feedback, and improving over time.

This shift mirrors our own biological development. A child does not learn that a stove is hot because of a linguistic label; they learn through the immediate, visceral consequence of a physical interaction. By prioritizing environmental consequences over prepackaged answers, Q-learning offers a more organic mental model for intelligence—one in which correctness is not a property of the data, but a result of the agent’s goals and the environment’s physics.

Takeaway 2: The incremental logic of the Q-value

At the core of this adaptive behavior is the Q-value: a mathematical estimate of the long-term return an agent can expect by taking a specific action in a specific state. The brilliance of Q-learning, however, lies in its patience. It does not update its worldview based on a single flash of luck; it relies on a cautious, incremental update rule.

Consider a system where all Q-values are initialized at zero. If an agent takes an action that suddenly yields a reward of 5, a primitive system might immediately set that action’s value to 5. Q-learning is more sophisticated: it moves the estimate from 0 toward 5 by only a fraction of the gap. This fraction is the learning rate (α), a hyperparameter that dictates how much new information should override the old. This incrementalism is caution built into the arithmetic: it keeps the agent from overreacting to noise or outliers, so that it builds a stable, recency-weighted average of experience over time rather than chasing every fleeting signal.
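As a worked example, assume a learning rate of α = 0.1 (a value chosen here for illustration) and ignore any future reward beyond the immediate 5, so that the update reduces to moving the estimate a fraction α of the way toward the reward:

$$
Q \leftarrow Q + \alpha\,(r - Q)
$$

Starting from zero and receiving the same reward of 5 on each of three visits gives:

$$
\begin{aligned}
Q_1 &= 0 + 0.1\,(5 - 0) = 0.5 \\
Q_2 &= 0.5 + 0.1\,(5 - 0.5) = 0.95 \\
Q_3 &= 0.95 + 0.1\,(5 - 0.95) = 1.355
\end{aligned}
$$

After n such visits the estimate is 5(1 − 0.9ⁿ), so it approaches 5 steadily without ever jumping there on the strength of a single outcome.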

Takeaway 3: The curse of dimensionality and the limits of memory

Despite its conceptual elegance, classic Q-learning is often a victim of its own precision, drowning in the very data it seeks to organize. In its most basic form—tabular Q-learning—the algorithm requires a dedicated entry for every possible state–action pair. Imagine a massive ledger where every potential move in every potential situation is recorded.

As an environment moves from a simple grid to the complexity of the real world, the number of required entries explodes, making the table infeasible to maintain. This is the curse of dimensionality. It is a humbling irony of AI research: a mathematically sound algorithm can be rendered useless by the sheer number of situations it must enumerate and visit. This limitation eventually necessitated the move from simple tables to deep Q-networks, where neural networks act as function approximators to estimate values for states the agent has never seen.

Takeaway 4: The high cost of staying safe (the exploration paradox)

A successful Q-learning agent requires strong exploration. If an agent is too conservative, repeating only the paths it already knows to be safe, it risks the tragedy of a suboptimal policy. It becomes an entity that is good enough but never truly great, trapped at a local peak because it was too afraid to descend into the valley of the unknown.

This reveals the inherent cost of intelligence. To eventually identify the best moves, an AI must be willing to make deliberately suboptimal ones. It must risk immediate failure to gather the long-term data necessary to refine its internal Q-values. Exploration is not a distraction from the goal; it is the price of admission for finding a better path. An agent that never risks mediocrity can never achieve mastery.
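The cost of never exploring can be demonstrated on a two-option problem. In the sketch below, the payouts, the tie-breaking rule, and the function name `run_bandit` are assumptions made for illustration: option 0 pays 1 and option 1 pays 5, and a purely greedy agent tries option 0 first.

```python
import random

def run_bandit(epsilon, steps=1000, alpha=0.1, seed=0):
    """Learn the value of two options; explore at random with probability epsilon."""
    rng = random.Random(seed)
    payouts = [1.0, 5.0]  # hypothetical deterministic rewards
    q = [0.0, 0.0]
    pulls = [0, 0]
    for _ in range(steps):
        if rng.random() < epsilon:
            arm = rng.randrange(2)           # deliberate, possibly suboptimal trial
        else:
            arm = 0 if q[0] >= q[1] else 1   # greedy choice; ties go to option 0
        q[arm] += alpha * (payouts[arm] - q[arm])
        pulls[arm] += 1
    return q, pulls

for epsilon in (0.0, 0.1):
    q, pulls = run_bandit(epsilon)
    print(f"epsilon={epsilon}: pulls={pulls}, estimates={[round(v, 2) for v in q]}")
```

The greedy agent settles on the first option that pays anything and never learns that the other is five times better. The exploring agent spends some steps on apparently worse choices and, as a result, finds the better one.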

Takeaway 5: Chasing a moving target

Even with sound exploration, Q-learning remains a delicate balancing act. It is notoriously sensitive to hyperparameters such as the learning rate and the discount factor (which determines how much the agent weighs future rewards versus immediate ones). If the rules of the environment shift—a phenomenon known as non-stationary dynamics—the agent finds itself chasing a moving target, where hard-won knowledge is rendered obsolete by a changing world.

Furthermore, when we move beyond simple tables and use function approximation to handle continuous spaces, the problem can become fundamentally ill-posed. Because the agent is updating its estimates based on other estimates, the logic can become circular and unstable. Without meticulous tuning, the agent’s intelligence can collapse, leading to divergence rather than a stable policy.
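The circularity is visible in the usual squared-error objective for a parameterized Q-function. The notation below (parameters θ, discount factor γ, transition from state s to s′ with reward r) is the standard textbook form and is given here as an illustration, not as a formula taken from the sources cited in this article:

$$
y = r + \gamma \max_{a'} Q(s', a'; \theta), \qquad
L(\theta) = \bigl(y - Q(s, a; \theta)\bigr)^2
$$

Both the prediction Q(s, a; θ) and the target y depend on the same parameters θ, so every update that improves the prediction also moves the target it was aiming at. Deep Q-network implementations commonly reduce this effect by computing y from a separate, periodically refreshed copy of the parameters.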

Conclusion: The enduring power of the mental model

Despite the challenges of scaling and the sensitivity of its parameters, Q-learning remains a bedrock of sequential decision-making. It strips away the opacity of modern neural networks to reveal the core engine of reinforcement learning: the iterative refinement of value through experience. It is the direct ancestor of the deep Q-networks that learned to play Atari games from raw pixels and of many value-based methods used in modern robotics.

As we stand on the precipice of more autonomous and adaptive AI, we might look inward. How much of what we call human expertise—the intuition of a grandmaster or the split-second reaction of a pilot—is simply a high-level version of Q-learning? Perhaps our lives are just a vast, continuous update of our own internal Q-values, refined through a lifetime of trial and error.

References

These sources ground the technical claims above: core definitions, where tabular and value-based methods break down, stability of Q-learning with function approximation, and practical guidance for deep Q-networks in real pipelines.

Q-learning. Wikipedia. Overview of the algorithm, the Bellman backup, and the tabular setting.

Limitations of value-based methods. ApX Machine Learning (intermediate RL course). Why argmax-based value methods struggle with continuous actions and high-dimensional spaces—directly tied to the feasibility discussion in §2–§3.

Is Q-learning an ill-posed problem? arXiv (HTML version). Formal perspective on instability when Q-learning uses function approximation and bootstraps from its own estimates; supports the cautions in §3 and Takeaway 5.

Practical tips for training deep Q networks. Anyscale. Engineering-focused notes on hyperparameters, training stability, and operational pitfalls when moving from tabular Q-learning to DQNs—aligned with §5 and production readiness.

Three fundamental flaws in common reinforcement learning algorithms (and how to fix them). Towards Data Science. Accessible treatment of exploration, instability, and related failure modes in widely used RL methods, complementing the risk framing in §4–§6.